What Is Your Data’s Star Rating(s)?

The Linked Data movement was kicked off in mid-2006 when Tim Berners-Lee published his now-famous Linked Data Design Issues document. Many had already been promoting the use of W3C Semantic Web standards to achieve the same effect and benefits, but it was his document, and its use of the term Linked Data, that crystallised the approach and gave it focus and a label.

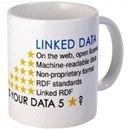

In 2010 Tim updated his document to include the Linked Open Data 5 Star Scheme