Schema.org – Extending Benefits

I find myself in New York for the day on my way back from the excellent Smart Data 2015 Conference in San Jose. It’s a long story about red-eye flights and significant weekend savings which I won’t bore you with, but it did result in some great chill-out time in Central Park to reflect on the week.

In its long, auspicious history, the conference (first SemTech, then Semantic Tech & Business, and now Smart Data) has always attracted a good cross section of the best and brightest in the Semantic Web, Linked Data, Web, and associated worlds. This year was no different. I attended in my new role as an independent working with OCLC and at Google.

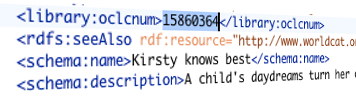

I was there on behalf of OCLC to review significant developments with Schema.org in general – now with 640 Types (Classes) and 988 properties – used on over 10 million websites.